Your SOUL.md is dialed in. Your agent has personality, rules, proactive behaviors. It greets you by name. It knows you prefer concise answers.

Then you say: "Remember that API key format we discussed yesterday?"

Blank stare. Generic response. Zero recall.

You've solved the identity problem. Now you have a memory problem — and it's the reason most OpenClaw agents plateau after week one. They can't learn because they can't remember.

How OpenClaw memory actually works

OpenClaw's memory system is radically simple: plain Markdown files on disk. No hidden state, no magic databases. The agent only "remembers" what gets written to files. That's the whole philosophy — and it's both its greatest strength (you can read and edit everything) and its greatest trap (if nothing gets written, nothing gets remembered).

The default workspace looks like this:

    ~/.openclaw/workspace/
    ├── MEMORY.md            # Long-term curated memory
    ├── DREAMS.md            # Dreaming sweep summaries (experimental)
    └── memory/
        ├── 2026-04-13.md    # Today's daily log
        ├── 2026-04-12.md    # Yesterday's log
        └── 2026-04-11.md    # ...and so on

Today's and yesterday's daily logs are loaded automatically into every session. MEMORY.md loads at the start of every private (DM) session. Everything else? Your agent has to actively search for it using memory_search.
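To make the loading rule concrete, here is a minimal Python sketch of which files end up in context at session start. It only restates the rule described above; the function name and the `private_session` flag are made up for illustration and are not part of OpenClaw.

```python
from datetime import date, timedelta
from pathlib import Path


def files_loaded_at_start(workspace: Path, today: date, private_session: bool) -> list[Path]:
    """Return the memory files that load automatically, per the rule above."""
    yesterday = today - timedelta(days=1)
    loaded = [
        workspace / "memory" / f"{today.isoformat()}.md",
        workspace / "memory" / f"{yesterday.isoformat()}.md",
    ]
    if private_session:
        # MEMORY.md is only loaded for private (DM) sessions.
        loaded.insert(0, workspace / "MEMORY.md")
    return loaded
```

Anything older than yesterday never appears in that list, which is exactly why the search rules later in this issue matter.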

That's the architecture. Simple. But most people use it wrong.

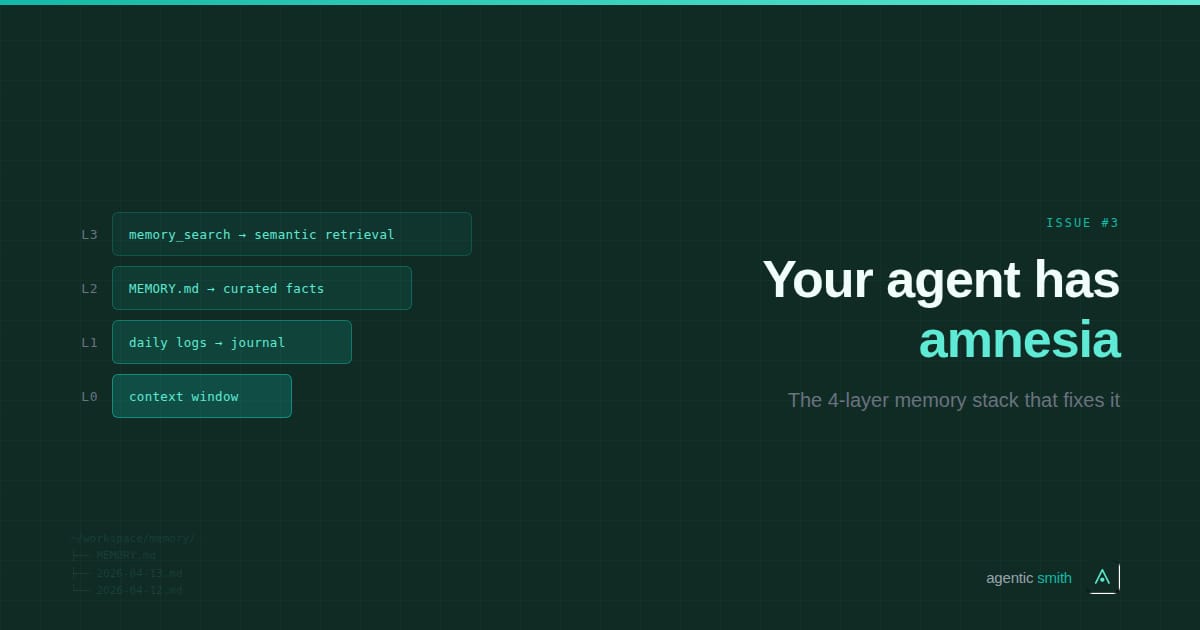

The L0–L3 memory stack

Think of OpenClaw memory as four layers, each handling a different timescale and purpose. The mistake most people make is trying to solve memory at a single layer — usually by cramming everything into MEMORY.md. That's like storing your entire database in a config file.

L0: Conversation context — the context window

This is what the model sees right now. It's automatic — the current conversation, system prompt, and loaded bootstrap files. It's also ephemeral. When the conversation ends (or compaction kicks in during long sessions), this context evaporates.

You don't configure L0, but you need to understand it. Compaction is the big risk here. During long conversations, OpenClaw summarizes older messages to free up tokens. Before compaction runs, a memory flush automatically saves important context to disk — but only if you haven't disabled it. Check that it's enabled:

    // In ~/.openclaw/config.json
    {
      "compaction": {
        "memoryFlush": {
          "enabled": true
        }
      }
    }

If you've been losing context mid-conversation, this is probably why.
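If you'd rather check the flag from a script than by eye, something like this works as a rough sketch. It assumes the config is strict JSON at the path shown above and that a missing key means the flush is still on (the "only if you haven't disabled it" behavior); both are assumptions, and the helper names are made up for illustration.

```python
import json
from pathlib import Path


def memory_flush_enabled(config: dict) -> bool:
    """Read compaction.memoryFlush.enabled from a parsed config dict."""
    flush = config.get("compaction", {}).get("memoryFlush", {})
    # Assumption: a missing key means the default (enabled) is in effect.
    return bool(flush.get("enabled", True))


def check_config(path: Path) -> bool:
    """Load the config file and report whether memory flush is on."""
    # json.loads is strict: a file that still contains // comments raises here.
    return memory_flush_enabled(json.loads(path.read_text(encoding="utf-8")))
```

Call it with `check_config(Path.home() / ".openclaw" / "config.json")`; a `False` result is the thing to fix.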

L1: Daily logs — your agent's journal

The memory/YYYY-MM-DD.md files are append-only logs of what happened each day. Decisions made, tasks completed, things learned. OpenClaw creates these automatically, but the quality depends on your agent knowing what's worth writing down.

Add this to your AGENTS.md:

    # Memory Rules

    - Write significant decisions, preferences, and task outcomes
      to today's daily log immediately; don't wait for session end.
    - When I share a preference, API key, project detail, or
      recurring instruction, write it to the daily log AND flag
      it for MEMORY.md promotion.
    - Before acting on any assumption about past context,
      run memory_search first. Check your notes, don't guess.

That last rule, "search memory before acting," is the single highest-ROI memory instruction you can add. Without it, your agent guesses from whatever happens to be in L0 context instead of checking its notes.
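For a feel of what "write it down immediately" means on disk, here is a small sketch of an append-only write to today's log. The timestamped-bullet entry format is an assumption for illustration; OpenClaw's own log entries may look different.

```python
from datetime import datetime
from pathlib import Path


def append_to_daily_log(workspace: Path, note: str, now: datetime) -> Path:
    """Append one timestamped bullet to memory/YYYY-MM-DD.md and return its path."""
    log_path = workspace / "memory" / f"{now:%Y-%m-%d}.md"
    log_path.parent.mkdir(parents=True, exist_ok=True)
    # Mode "a" never rewrites earlier entries, matching the append-only rule.
    with log_path.open("a", encoding="utf-8") as log:
        log.write(f"- {now:%H:%M} {note}\n")
    return log_path
```

The point of appending rather than rewriting is that a crashed or compacted session can never erase what was already logged.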

L2: MEMORY.md — curated long-term memory

This is the always-loaded layer: durable facts, preferences, key decisions. It loads into every DM session, so it has the same token budget pressure as SOUL.md. Every character costs tokens on every interaction.

The problem: MEMORY.md tends to grow until it eats your token budget or gets truncated. People dump meeting notes, one-off task details, and transient project context here. That's L1 material, not L2.

What belongs in MEMORY.md: things that are true over time. Your coding preferences. Your team members' names and roles. How you like your morning briefing structured. The deployment process for your main project. Things the agent needs to recall instantly, every session, without searching.

What doesn't: yesterday's meeting notes. One-off debugging sessions. Temporary project deadlines. That stuff lives in daily logs and gets retrieved via search when relevant.

L3: Semantic search — retrieval on demand

memory_search is the tool that makes the stack work at scale. Instead of loading every memory into context (which would blow your token budget in a week), the agent queries a search index and pulls in only what's relevant.

Out of the box, OpenClaw uses SQLite-backed keyword search (BM25). Fast and zero-config, but it won't find "the API pricing decision" if you stored it as "we chose the $29/month tier." For semantic matching, you need to configure an embedding provider. If you already have an OpenAI, Gemini, Voyage, or Mistral API key configured, hybrid search (keyword + vector) enables automatically.
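The keyword gap is easy to see with a toy tokenizer. This sketch is not BM25 and not OpenClaw's index; it only shows which terms a purely lexical match has to work with for the example above.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase word tokens; a crude stand-in for a keyword index."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def shared_terms(query: str, stored: str) -> set[str]:
    """Terms a pure keyword search could match on."""
    return tokens(query) & tokens(stored)


query = "the API pricing decision"
stored = "we chose the $29/month tier"
print(shared_terms(query, stored))  # {'the'}
```

The only overlap is "the", a term so common that keyword ranking gives it almost no weight. Hybrid search closes that gap by adding vector similarity on top of keyword matching.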

Verify it's working:

    openclaw memory status --deep

If hybrid search shows as inactive, add your embedding provider key to your environment variables and reindex:

    openclaw memory index --verbose

The three memory failure modes

1. The write gap. Your agent has interesting conversations but never writes anything to disk. Memory flush catches some of this before compaction, but it's a safety net — not a strategy. Fix: the AGENTS.md rules above that make writing explicit. "Write it down, then act on it."

2. The MEMORY.md blob. Everything dumped into long-term memory. File grows to 10,000+ characters. Token budget explodes. Agent starts getting truncated context. Fix: keep MEMORY.md under 4,000 characters. Everything else lives in daily logs and gets retrieved via memory_search. If it's not needed every session, it doesn't belong in MEMORY.md.

3. The search gap. Agent never uses memory_search. It either hallucinates past context or says "I don't have information about that" when the answer is sitting in last Tuesday's log. Fix: add the explicit "search before acting" rule to AGENTS.md. Make retrieval mandatory, not optional.
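Failure mode 2 is the easiest one to catch mechanically. A minimal sketch of a budget check, using the 4,000-character ceiling suggested above (the function names are illustrative, and the count is characters, not tokens):

```python
from pathlib import Path

MEMORY_BUDGET_CHARS = 4_000  # the ceiling suggested in this issue


def memory_budget_report(text: str, budget: int = MEMORY_BUDGET_CHARS) -> str:
    """Summarise how much of the character budget MEMORY.md uses."""
    used = len(text)
    if used > budget:
        return f"over budget by {used - budget} characters ({used}/{budget})"
    return f"within budget ({used}/{budget})"


def check_memory_file(path: Path) -> str:
    """Run the budget report against a MEMORY.md file on disk."""
    return memory_budget_report(path.read_text(encoding="utf-8"))
```

Run `check_memory_file` against `~/.openclaw/workspace/MEMORY.md` as part of a weekly review and trim as soon as it reports over budget.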

Dreaming: the new automated layer

OpenClaw 2026.4.9 shipped "Dreaming" — a three-phase background consolidation system that automatically promotes strong signals from daily logs into MEMORY.md. It runs on a cron schedule (default: 3 AM daily), scores candidates across six dimensions (relevance, frequency, query diversity, recency, consolidation, conceptual richness), and only promotes entries that pass strict thresholds.

It's opt-in and disabled by default. Enable it:

    /dreaming on

The Dream Diary (DREAMS.md) shows you what got promoted, what got discarded, and why. Review it weekly. A typical cycle looks like:

    ## Dream Cycle - 2026-04-13 03:00 UTC

    ### Promoted to Long-Term Memory

    - User prefers TypeScript over JavaScript (score: 0.87)
    - Deployment uses PM2 reload, never stop+start (score: 0.81)

    ### Discarded (below threshold)

    - One-off weather question (score: 0.12)
    - Temporary debugging session (score: 0.09)

Dreaming is essentially an automated memory janitor. But it's early: review what it promotes and manually edit MEMORY.md if it gets something wrong.
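If you want the weekly review to be quicker, a short parser can pull the promoted and discarded entries out of a cycle. This is a sketch that assumes DREAMS.md entries follow the "- text (score: x)" shape of the sample above; the real file may differ, so treat the regex as a starting point.

```python
import re

ENTRY = re.compile(r"^- (?P<text>.+) \(score: (?P<score>\d\.\d+)\)$")


def parse_dream_cycle(markdown: str) -> dict[str, list[tuple[str, float]]]:
    """Group '- text (score: x)' bullets under their '###' section heading."""
    sections: dict[str, list[tuple[str, float]]] = {}
    current = None
    for line in markdown.splitlines():
        line = line.strip()
        if line.startswith("### "):
            current = line[4:]
            sections[current] = []
        elif current is not None and (match := ENTRY.match(line)):
            sections[current].append((match["text"], float(match["score"])))
    return sections
```

Sorting the "Promoted" list by score, lowest first, shows you the borderline promotions, which are the ones most worth a manual look.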

The compound effect

After a week with the full L0–L3 stack active, ask your agent: "Review your MEMORY.md. Remove anything outdated, consolidate duplicates, and suggest additions based on this week's daily logs."

It's the same iterative refinement loop as SOUL.md: compound improvement over time. The agents that remember well are the agents that become useful, and the memory stack is what makes that possible.

Production-ready MEMORY.md template

Copy this into ~/.openclaw/workspace/MEMORY.md. Replace the [BRACKETED] sections. Your agent will load it at the start of every DM session.

    # Memory: [YOUR NAME]'s Agent

    Last reviewed: [DATE]

    ## Preferences

    - Communication: [concise / detailed / match-my-energy]
    - Code style: [language preferences, formatting conventions]
    - Scheduling: [timezone, working hours, meeting preferences]
    - Tools: [preferred apps, services, workflows]

    ## Key People

    - [Name]: [role/relationship]. [One relevant detail].
    - [Name]: [role/relationship]. [One relevant detail].

    ## Active Projects

    - [Project name]: [One-line status]. [Key constraint or deadline].
    - [Project name]: [One-line status]. [Key constraint or deadline].

    ## Recurring Workflows

    - Morning briefing: [what to include, what time]
    - [Workflow]: [trigger and expected behavior]
    - [Workflow]: [trigger and expected behavior]

    ## Learned Rules (agent-discovered)

    <!-- This section grows over time as the agent learns your patterns.
         Ask your agent weekly: "What patterns have you noticed that
         should be added to MEMORY.md?" -->

Keep this under 4,000 characters. If a section is growing beyond three or four entries, it's a sign that detail should live in daily logs instead, retrievable via memory_search rather than loaded into every prompt.

Pair this with the AGENTS.md memory rules from The Forge above. The template tells the agent what to remember. The rules tell it how to manage memory over time.
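One easy mistake with any fill-in template is shipping it with a placeholder still in place. A small sketch that lists leftover [BRACKETED] fields; the helper name is made up, and it will also flag ordinary Markdown link text, so read the output rather than trusting it blindly.

```python
import re

PLACEHOLDER = re.compile(r"\[[^\]\n]+\]")


def unfilled_placeholders(template_text: str) -> list[str]:
    """Return bracketed placeholders that still need replacing, in order."""
    # Drop HTML comments first so notes to yourself are not flagged.
    visible = re.sub(r"<!--.*?-->", "", template_text, flags=re.DOTALL)
    return PLACEHOLDER.findall(visible)
```

An empty list means every field has been filled in; anything else is a line your agent would otherwise load verbatim into every DM session.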

Skill review: MemPalace

What it does: A local-first semantic memory system that organizes memories into a spatial hierarchy — Wings (people, projects, knowledge), Halls (sub-categories), and Closets (summaries). Uses ChromaDB for vector storage and SQLite for a knowledge graph. Everything stays on your machine.

The headline number: 96.6% recall on the LongMemEval benchmark in verbatim mode, with zero external API calls. The spatial structure alone provides a 34% retrieval boost over flat search — before any semantic matching runs.

Setup difficulty: Medium-high. Requires Python, ChromaDB, and either the MCP server or the OpenClaw native plugin. Budget 30 minutes for a clean install. The MCP route is documented; the native OpenClaw skill on ClawHub is new (published this week) and still rough around the edges.

Verdict: MemPalace solves a real problem — as your memory grows past what MEMORY.md and flat daily logs can handle, structure starts to matter more than search quality. The "palace" metaphor isn't just branding; organizing memories into Wings and Halls genuinely improves retrieval because the structure narrows the search space before vector similarity even kicks in.

The catch: it's a second memory system running alongside OpenClaw's native one. You're now managing two stores, two indexing processes, two potential failure points. For most builders, OpenClaw's built-in memory stack (daily logs + MEMORY.md + memory_search with hybrid mode) is enough for the first few months. MemPalace becomes worth it when you're running long-lived agents across multiple projects and cross-project memory bleed becomes a problem.

Watch out for: No official OpenClaw integration yet — there's a PR pending and a community skill on ClawHub, but neither is battle-tested. The competing tools Mem0 and MemOS Cloud already have smoother OpenClaw integrations if you're willing to go cloud-hosted.

Rating: Worth watching. Install it if you're hitting memory scale limits. Skip it if OpenClaw's native memory stack still handles your workload — revisit in a month.

Memory is the moat

Every builder has access to the same models. Claude, GPT, Gemini, Llama — pick your engine. The playing field is level.

What isn't level: how well your agent remembers you.

An agent that recalls your preferences, your team, your project context, your communication style — after four weeks of daily use — is a fundamentally different tool than one that starts fresh every session. And that difference compounds. The agent that remembers well gets better instructions, catches more edge cases, and anticipates your needs in ways a blank-slate agent never will.

Issue #1 was about identity. Issue #3 is about memory. Together, they're the foundation everything else is built on. Next week: security hardening — because an agent that remembers everything about you is only useful if nobody else can access it.

See you next week.

— Michael